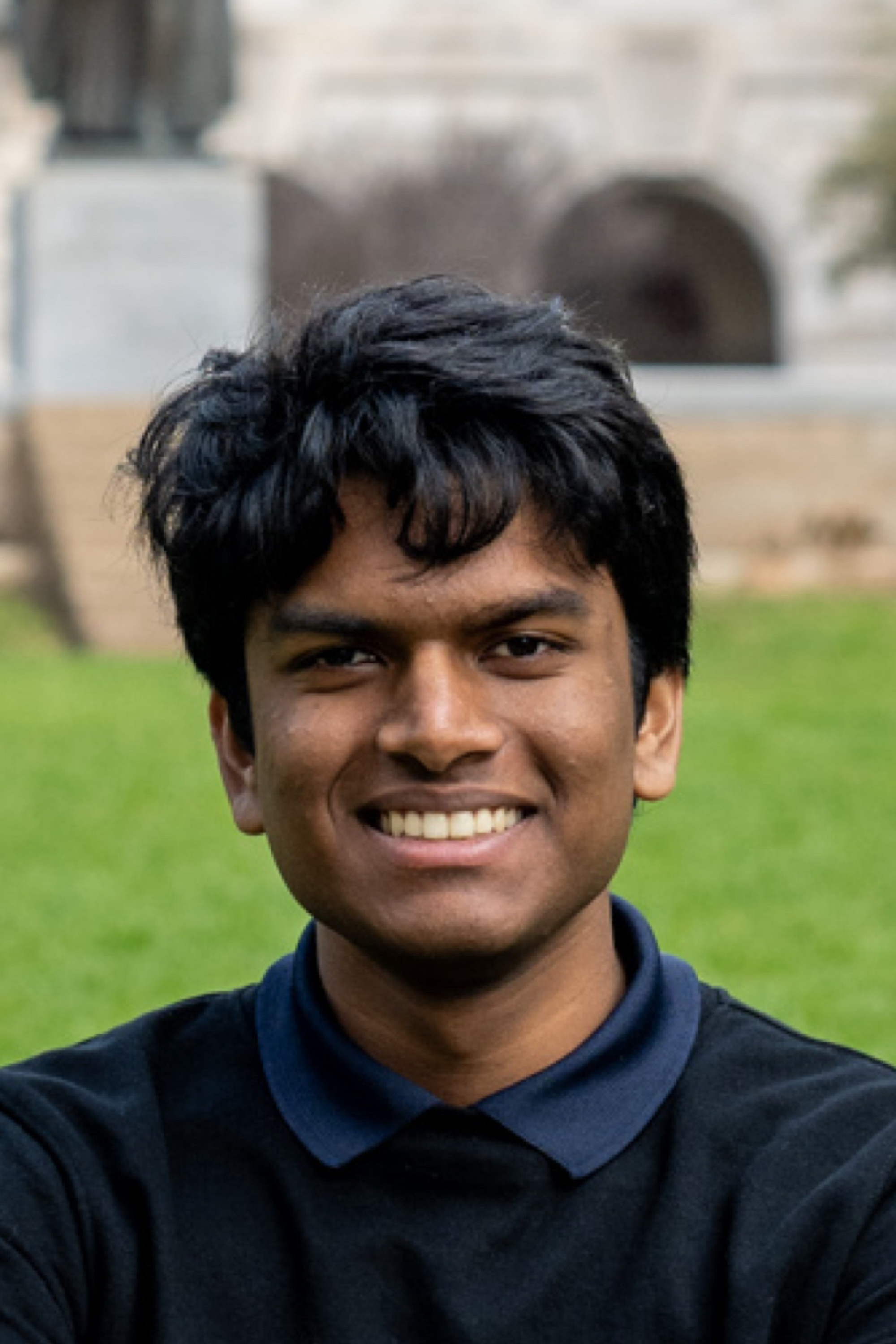

PhD Student

University of California, Berkeley

prasann [at] berkeley.edu | PrasannS | prasann_singhal | Semantic Scholar

Hi, I'm Prasann! I'm a first-year PhD student at UC Berkeley, advised by Jacob Steinhardt and Sewon Min. I am supported by the NSF Graduate Research Fellowship.

My research focuses on Natural Language Processing, and I'm broadly interested in better understanding how language models work and improving them in domains with potential for positive societal impact. I've gotten to work on lots of cool stuff, from understanding RLHF to improving the efficiency of decoding algorithms.

As an undergrad, I was extremely fortunate to be advised by the amazing Greg Durrett at UT Austin (I majored in CS and linguistics), and I was supported by many awesome labmates and collaborators. Before getting into research, I also had the chance to participate in nearly 20 hackathons and made a lot of fun projects.

I love meeting new people, so feel free to send me an email if you'd like to chat about anything (e.g., related research, unrelated research, or just to say hi)! Likewise, if you're a high schooler or undergrad anywhere who's interested in research or grad school, I'm always happy to give advice if you think it'd be useful.

ChartMuseum: Testing Visual Reasoning Capabilities of Large Vision-Language Models

To CoT or not to CoT? Chain-of-thought helps mainly on math and symbolic reasoning

Adaptive Margin RLHF via Preference over Preferences

D2PO: Discriminator-Guided DPO with Response Evaluation Models code

A Long Way to Go: Investigating Length Correlations in RLHF code

EEL: Efficiently Encoding Lattices for Reranking code

Assessing Out-of-Domain Language Model Performance from Few Examples

[Apr. 2026] I was awarded the NSF Graduate Research Fellowship!

[Dec. 2024] I was named an Awardee for the 2025 CRA Outstanding Undergraduate Researcher Award!

[2023-2024] A Long Way to Go in RLHF @ IST & Unbabel Seminar; SEAL Reading Group, Scale AI; UT Austin LIN 393 Seminar

Scale AI Research Intern / Part-time Researcher. Summer-Fall 2024.

UT Austin TAUR Lab Undergraduate Research Assistant, Natural Language Processing. Fall 2021-Present.

UT CS 388 (NLP) TA (Fall 2024)

UT Austin Directed Reading Program Mentor (Spring 2024)

Founder / Teacher - Katy HACK Initiative: I spent 3 years starting and running CS education programs in local elementary and junior high schools.

Volunteer Teacher - I spent a summer teaching English and computer fundamentals in a village in Gujarat.